Bibliographic information

1

Introduction: From Looking at Photographs to Images Being Read by Machines First

Photographic theory often begins from a basic scene: the photograph appears first, the human viewer looks afterward, and meaning gradually emerges through attention, return, and interpretation[1-3].

Today, that starting point remains valid, but it is no longer sufficient to describe the actual path through which we encounter photographs. Very often, we do not first see a single photograph; instead, we are first led by systems to look for a class of photographs. If one types sunset into a phone gallery, for example, the system automatically gathers images it judges to match that category. On social platforms, whether a photograph is seen more widely often depends on how the platform has identified and ranked its content in the background. In other words, before an image reaches the human retina, it has usually already passed through a series of computational steps, including segmentation, recognition, caption generation, and distribution decisions.

This article summarizes that shift as the semanticization of photography. Here, semanticization does not mean simply writing a photograph into a sentence; rather, it means translating photographs into comparable and retrievable forms of representation: labels such as seaside or wedding, automatically generated image descriptions, and vector representations used to compute similarity. A simple metaphor may help clarify what a vector is: the system assigns each photograph a coordinate on a semantic map. The closer two coordinates are, the more similar the photographs appear from the system's point of view, and the more readily they can recall one another across loops of search, recommendation, and generation. Photographic images thus gradually shift from single works for human viewing to semantic objects available for computational use.

This shift matters for photographic studies not because of its technical details, but because it rewrites how visibility and interpretive authority are distributed. Makers begin to anticipate what platforms can recognize more easily; viewers become accustomed to mobilizing images through keywords and questions; and platforms decide in advance, within the database itself, which photographs are worth seeing. As Daniel Palmer argues, within environments organized by software and networks, single photographs are increasingly folded into processes of aggregation, annotation, and reorganization, and their meaning and function depend ever more heavily on infrastructural processing[4,5].

On this basis, the article develops four questions. First, how do images move from polysemous visual objects to machine-readable and rankable semantic objects? Second, how do retrieval and prompting rewrite looking into a form of query-based seeing centered on asking, summarizing, and calling up images? Third, how do generative models alter the relation between photography and time, reality, and trace, such that documentary syntax and evidentiary force can no longer be guaranteed by appearance alone? Fourth, under conditions of expanding semanticization, how do misrecognition, blind spots, and embodied experiences such as haptic visuality remind us that perceptual residues still remain that cannot be fully archived? The basic argument advanced here is that once semanticization becomes infrastructural, the visibility, viewing, and memory of photography are often organized inside machines first, and the agency of both author and viewer must be rethought and redistributed under these new conditions.

2

From Image Polysemy to Machine Readability: The Infrastructuralization of Anchorage

In Image-Music-Text, Roland Barthes describes the image as an inherently polysemous sign: the same visual field may generate different, even contradictory, meanings, and interpretation slides among multiple possible paths. To prevent communication from stalling in polysemy, mass media often use language such as headlines and captions to fasten viewing to a legible route. This is what Barthes calls anchorage[6]. A simple example makes the point: the same photograph of a street crowd confronting police may be read as threatening if its caption says riot scene, but as an expression of rights if it says protest scene. Anchorage does not eliminate polysemy, but it makes one reading easier to accept as the default.

In traditional media, anchorage largely takes place in the foreground: headlines and captions are visible and contestable, and editorial choices are relatively explicit. After the deep intervention of platforms and artificial intelligence, anchorage has not disappeared, but it has increasingly moved into background processes. Images are first automatically recognized, labeled, and classified by systems, then folded into clustering, retrieval, and ranking. What users see first in a feed, which results appear first in a search, and which words a platform uses to summarize an image are often arranged in advance within the database rather than generated only at the moment of viewing. Anchorage thus shifts from words attached beside an image to rules written into infrastructure.

For this rule system to operate, images must first be translated into computable form. Vision-language models typically encode both images and text as vectors. A vector may be understood as an index card or a barcode: it is not a complete explanation of the image, but a summary representation that facilitates comparison. With such representations, systems can efficiently carry out two types of tasks: retrieval, by finding photographs closer to descriptions such as seaside sunset, cat, or wedding; and ranking, by placing results that better match a query or better satisfy platform goals closer to the top. Accordingly, when a photograph enters the system, the first question it faces is often not what it means, but which words it lies closest to in semantic space and what position it should occupy there.

Contrastive Language-Image Pretraining, or CLIP, is exemplary of this logic. It does not directly process the social context of photographs; instead, it frames training as a large-scale task of matching and ranking: among many candidate descriptions, the model must identify the sentence most likely to correspond to a given image and bring matched image-text pairs closer together in vector space[7]. This training is often implemented through contrastive objectives such as InfoNCE, whose intuitive meaning can be summarized as pulling correct pairs closer and pushing incorrect pairs farther apart[8]. Under such objectives, the model becomes especially good at compressing images into common, nameable features: recognizable subjects, typical actions, and familiar scenes. It is good at answering which description an image most resembles, but it does not guarantee coverage of all those elements of image experience that are difficult to articulate or name.

Anchorage thus acquires a new locus. It may no longer appear as a line of text beside a photograph, but rather as the image's position and score within similarity, classification, and ranking systems. Once that position is established, platforms amplify high-confidence, easily summarized, and operationally reusable features, and repeatedly reinforce them through search, recommendation, and automated summary. By contrast, ambiguity, privateness, personal association, and historical aftertones do not disappear, but they are more easily pushed into low-confidence and hard-to-classify regions from which systems cannot stably retrieve them. To be found under such a regime of visibility, image production is also reshaped in reverse: subjects become more prominent, images increasingly resemble a single keyword, and styles become easier to classify. In this sense, Palmer's redundant photograph takes on a new technical meaning: the independence of the single image yields to its function as a data sample, a metadata node, and a computable entry[4,5].

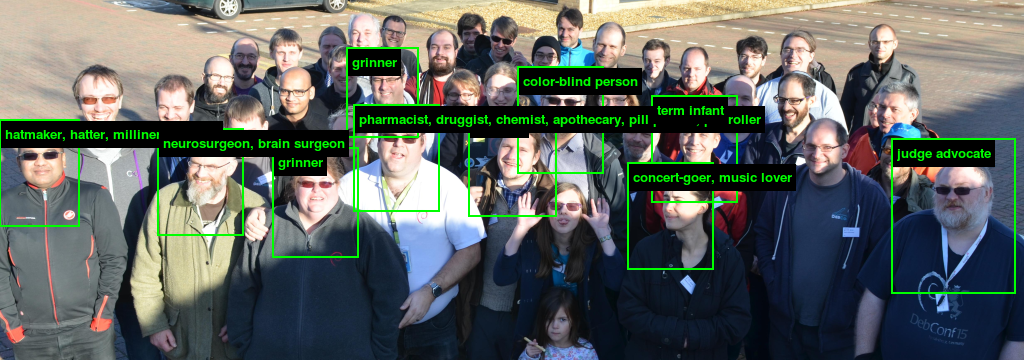

More importantly, once anchorage becomes infrastructural, it also imports existing taxonomies and biases into visual judgment. Kate Crawford and Trevor Paglen's ImageNet Roulette demonstrates the social consequence of this condition: when systems rely on inherited datasets and labeling schemes to classify portraits, terms inflected by class, gender, or race can be output as technical labels and thereby acquire an apparently neutral authority at the interface[9]. The issue here is not only whether labels are correct, but also where these labels come from, which categories are stabilized as common sense, which groups are more vulnerable to misrecognition, and how such misrecognition reshapes visibility, credibility, and the distribution of risk.

The violence of labeling, then, does not refer only to a few extreme errors. It also names an everyday filtering mechanism: systems preferentially extract and amplify what is easy to name, align, and retrieve, allowing images to circulate first as computable summaries. Images have not ceased to generate meaning, but which meanings can be recognized, distributed, and recalled must first pass through this filter. The polysemy of photography remains, yet it is increasingly reorganized into forms that machines can handle before it can enter visible and circulatory paths.

This is what it means for anchorage to become infrastructural: interpretation no longer adheres primarily beside the image, but is written into vector space and ranking rules that decide in advance what is more likely to be seen and how it will be described. Once anchorage exists in the form of ranking and retrieval, foreground viewing is more easily rewritten as a process of asking, filtering, and calling up.

3

Query-Based Seeing and the Reorganization of Attention

The image-text alignment and ranking discussed in the previous section largely take place in the background, where images are inserted into a semantic network that is searchable and comparable. Multimodal dialogue pushes this capacity into the foreground, making viewing increasingly unfold through the form of a question. In conversational AI interfaces that support image input, it has already become routine for users to send an image and then ask: What is this? Extract keywords for me. Does this photograph involve privacy risk? Write me a caption for posting. Here the image no longer merely waits to be gazed at and interpreted. It functions more like an entry point: around the same image, users keep asking questions, generating summaries, and rewriting descriptions, then connect the result directly to the next step in search, writing, generation, or decision-making.

This article calls such a mode of viewing, one that begins with questions and prompts and primarily produces reusable textual conclusions, query-based seeing. Its key feature is the priority of the question: viewing no longer begins from lingering and revisiting, but from a clear task such as recognition, summarization, comparison, filtering, risk assessment, or the generation of publishable wording. The image thus appears less as an object to behold than as material to be processed, while viewing itself increasingly resembles a delegable workflow.

At the level of system structure, multimodal dialogue usually passes through a conversion chain: the image is first compressed into feature representations by a visual encoder, then routed through a connector into a language model so that visual information can participate in reasoning and generation as conversational context. After instruction tuning, the system can do more than judge whether image and text match. It can generate answers, summaries, and operational suggestions concerning objects, scenes, text, relationships, and task goals[10]. In simpler terms, the system first stores the image as a summary and then translates that summary into a usable sentence according to the user's question. The same photograph is organized into different summaries under different prompts: ask what objects are in the picture and it foregrounds nameable subjects; ask whether the image is suitable for posting and it prioritizes faces, locations, and sensitive information. The prompt here is not only a question, but also a filter.

Query-based seeing, then, is not simply a faster way of looking at images. It changes the distribution of attention. Traditional viewing allows meaning to emerge through drift: the viewer may linger over a detail repeatedly or leave uncertainty within the image. Query-based seeing is more likely to specify an output in advance and then extract from the image those elements that can be stated, transcribed, and operationalized. Details that resist naming or compression into a sentence, such as subtle shifts of light at the edge of the frame, minute facial expressions, or ambiguous relational tension, do not disappear. Yet within an answer-first structure, they are more easily relegated to the status of background or noise. Viewing still takes place, but it is more frequently rewritten as the acquisition of a usable conclusion.

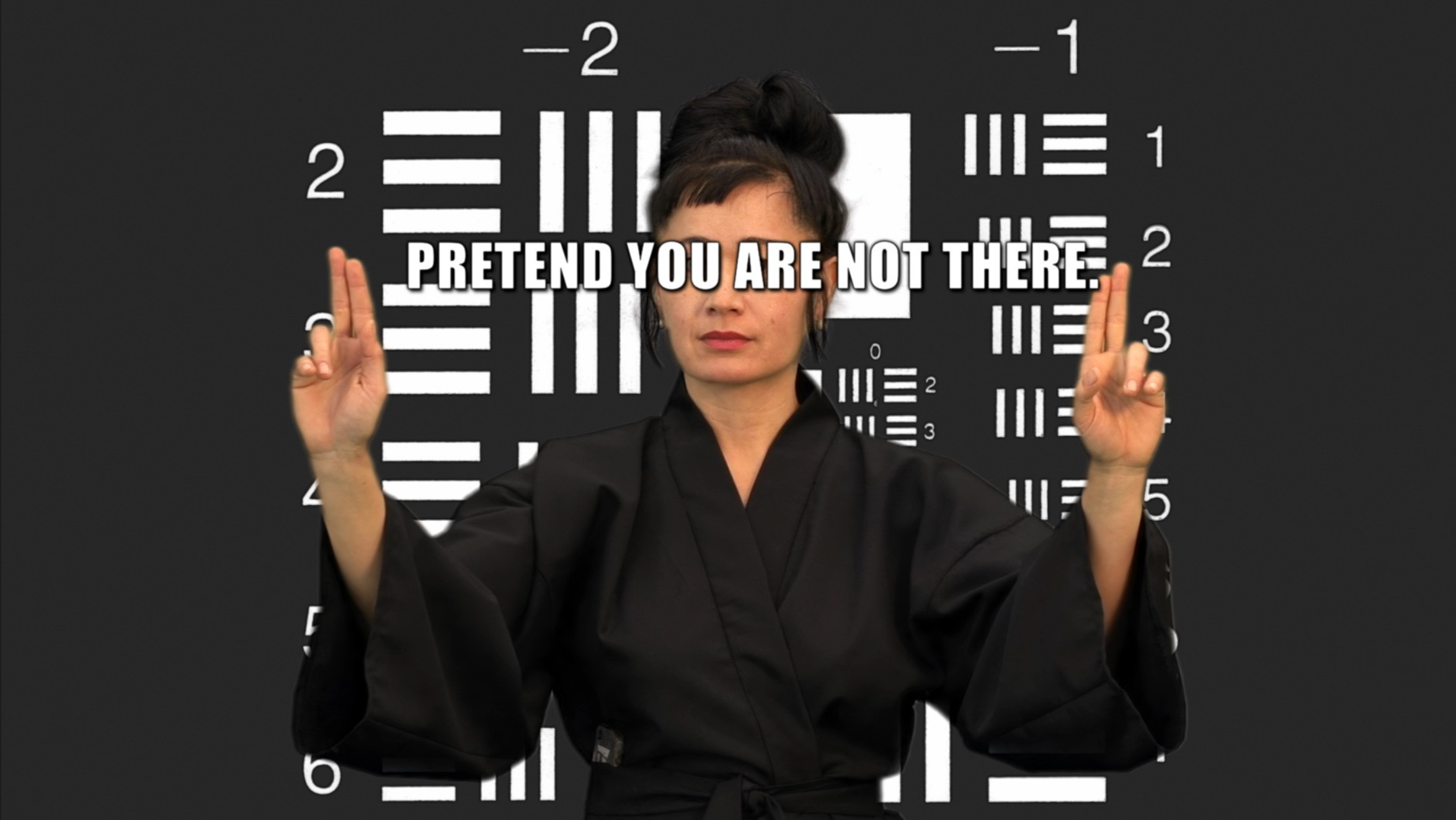

Slow looking has certainly not disappeared, but its place within dominant digital interfaces has been compressed. Platforms organize images through search, recommendation, clustering, and automatic summarization, making looking increasingly resemble searching and calling up. Palmer, discussing practices such as the image-sharing platform Flickr and the photo-aggregation project Photosynth, argues that software rewrites the value and visibility of photographs through metadata and algorithmic ranking. The more a photograph can be indexed, aggregated, and reorganized, the more readily it enters new circuits of circulation[4,5]. In How Not to Be Seen, Hito Steyerl satirizes the regime of digital visibility: in a system of high resolution and total recognition, presence is often bound to what can be parsed and indexed[11]. What cannot be measured does not necessarily vanish at once, but it more easily slides into low-weight regions of the system.

For photography, the structural effects of query-based seeing appear mainly on three levels. First, context is more easily deferred: the circumstances of shooting, the materiality of the medium, the mode of display, and the historical position of the image are often restored only after the answer has been generated. In most interfaces, the viewer first encounters subject recognition, style matching, and summary description. Second, the path of memory is rewritten: recollection depends less on accidental triggers and more on search terms, timelines, geolocation, and recommendation chains built from similar images. To remember a photograph increasingly resembles retrieving a result. Third, authorship undergoes a subtle displacement: photographers no longer only capture moments on site, but also anticipate in advance, during shooting and publishing, the clues needed for later retrieval, editing, and reuse, from titles and tags to subjects and styles that models can recognize consistently. The boundary between photography and metadata production thus becomes blurred.

This mode of seeing in turn reshapes image production itself. To be recognized, classified, and recommended, images tend to assume forms that are easier to describe: subjects stand out more strongly, compositions become more standardized, styles stabilize, and the image comes to resemble a keyword that can be summarized in one sentence. The rapid standardization of visual style on social platforms arises not only from aesthetic imitation, but also from adaptation to ranking and retrieval mechanisms. Photography certainly remains, but it increasingly appears embedded in a computation-first chain of distribution: processed by systems before it is seen by people. It is precisely along this chain that description begins to slide from summarizing photographs toward becoming generative instruction, thereby binding viewing and production more tightly together and preparing the ground for the next section's discussion of generative models.

4

From Trace to Probability: How Generative Models Rewrite Photography's Sense of Time

Photography occupies a special epistemological position in modern visual culture for one central reason: its physical link to what was once there. Whether in photojournalism, surveillance stills, or private snapshots, photographs are treated as evidence or triggers of memory because they are understood as optical traces of a moment. The shutter cuts continuous event-time into a slice that can be looked back upon. Barthes's repeated formulation in Camera Lucida, that-has-been, is built upon precisely this chain of trace[3]. Henri Cartier-Bresson's account of the decisive moment likewise depends on the same premise: the photographer is present, reality is in motion, and a crucial action and formal structure are sealed in a single instant[12].

What generative models alter is precisely this default binding between the photograph and event-time. Take diffusion models as an example. Rather than extracting a moment from external reality, they learn during training how to transform random noise step by step into something that looks like a certain type of image. During generation, under the constraint of prompts, they begin from noise and move toward a legible picture through multiple rounds of denoising. Latent diffusion models place this denoising process within compressed latent representations, allowing them to generate high-resolution images at lower computational cost[13]. For readers, the intuitive point is this: when one enters a prompt such as generate a black-and-white street photograph in the style of 1950s candid photography, the system is not returning to some scene in the 1950s to gather evidence; it is synthesizing a sample that conforms to the description on the basis of statistical regularities it has learned. The prompt therefore ceases to be merely a post hoc description of an image and becomes a condition of image production.

Image temporality is thereby reorganized. Photography corresponds to event-time: the shutter points to a moment that has already occurred, and to miss it is often to suffer an irreversible loss. Generated images, of course, also have time, but it is better understood as production time: how many denoising steps were taken, how many rounds the prompt was revised, and how many candidate outcomes were discarded and remade. To use an analogy internal to photography: one may make repeated test prints in the darkroom, but the negative still originates in the scene; generation, by contrast, brings the repeated testing to the front as the principal process, so that the image is premised from the outset on the possibility of being remade. Contingency does not disappear, but it comes more from parameters, sampling, and selection than from the world's own accidental instants.

Mario Klingemann's installation Memories of Passersby I makes this shift in temporality visible. The work continuously generates faces of people who never existed. The image keeps refreshing; no frame corresponds to a traceable past, and it is difficult to identify any one instant as the singular real moment[14]. What the work presents is not a preserved past but a present continuously emitted by the model: the image's presence comes from an ongoing computational process rather than a trace of the scene.

If Klingemann foregrounds continuous output, MT's The Honest Photographer returns the same problem to photography's most familiar syntax: a street photograph that seems to carry the aura of the decisive moment. The project first generates a still frame in an approximate Cartier-Bresson style, then builds around it multiple versions of story text and dialogic commentary, and further uses a video model to extend the image both backward and forward in time into different variants[15]. The still frame therefore ceases to be merely a conclusion and becomes a starting point that can be repeatedly overwritten: written into story, into commentary, and then into moving-image fragments before and after.

Within this structure, decisiveness shifts from the instant of the shutter to the selection among versions. Generation offers multiple candidate images, multiple narratives, and multiple temporal extensions, and the work must ultimately choose among them: which image to select, which narrative to adopt, and which temporal continuation to retain. Once a frame is selected, the work treats it as the moment; once an extension is selected, the still image is inserted into a corresponding before-and-after relation. More importantly, the project presents the human-machine dialogue and the production materials together, so that these choices are no longer hidden in the background but become available for viewing and discussion. Here authorship is expressed less through one-time presence at the scene than through version management and selection.

Placed side by side, these two practices point to a broader consequence: the appearance of photographs is becoming less and less able, on its own, to guarantee a relation to traces of the scene. Images that look documentary may be synthetic; physically shot negatives may also be supplemented and extended afterward through generative logic, and these differences are often not obvious at the level of interface. The problem, then, is not only whether an image is true, but more concretely how production chains and responsibilities are distributed. Does the image's temporality derive from an event or from a sampling procedure? By what mechanisms is its evidentiary force secured? Where do author, platform, model, and viewer each intervene, and where do they bear responsibility? The more an image can be described in language, rewritten by prompts, and repeatedly invoked by models, the less the boundary of photography can be determined by the optical scene alone. If generation renders trace unreliable, then another equally important question within a semanticized regime is where machines missee and overlook, and how these blind spots might be turned back against the system.

5

Misrecognition, Blind Spots, and the Reverse Use of Visibility

The previous section argued that generative models make traces unreliable. Equally important, however, is that semanticized systems do not always see correctly. The semanticization of photography may appear to be an ever-expanding apparatus of capture, but it is far from seamless. The more systems depend on statistical norms, the more likely they are to falter in the face of anomalies, ambiguity, and boundary cases. Everyday discussion often lumps such failures together under the term machine hallucination, but analytical precision requires distinguishing among their mechanisms, because different failures correspond to different technical premises, social consequences, and politics of visibility.

This article provisionally divides such failures into three categories. The first is misrecognition: the system places an image into the wrong category, or overgeneralizes a category onto an unsuitable object. Such errors often occur when image information is insufficient, through occlusion, low resolution, or severe glare, or when category boundaries are themselves vague, as with value-laden labels such as dangerous, suspicious, or indecent. Their consequences commonly appear as mismatched labels, erroneous ranking, and unpredictable penalties in visibility. The second is adversarial misdirection: the input does not alter human intuitive judgment, yet minor perturbations can drastically redirect the model's decision. This reminds us that visibility does not derive simply from image content, but from how the model reads and computes within a particular representational space. The third is generative confabulation: when information is insufficient or uncertain, the system still tends to provide a coherent answer and treats probable detail as settled fact. As image description, visual question answering, and summary become routine interface functions, this tendency deserves particular caution, because it disguises uncertainty as certainty and inserts it into communication and decision-making in textual form.

Shinseungback Kimyonghun's Cloud Face vividly reveals the classificatory inertia behind recognition error. The work points a facial-recognition system at clouds, and the machine continuously discovers faces in amorphous textures[16]. Such misrecognition is not merely an accidental mistake; it exposes an impatient drive to name. When a system is designed so that it must output what something is, it will often prefer misjudgment in the midst of chaos to leaving a flowing form outside a ready-made category template. In other words, machine seeing is not equivalent to neutral recording, but is a judgment pre-shaped by category libraries and threshold strategies.

Once our focus shifts from misrecognition to blind spots, the problem becomes more specific. A blind spot is not merely what the system cannot see; it concerns the conditions under which the system chooses not to look, cannot look, or refuses to admit that it has not seen clearly. Which inputs are marked as low confidence, outliers, or noise? Which objects are excluded from retrieval and recommendation because usable labels are unavailable? Which contextual dimensions, such as the social relation of the shot, relations of power, or emotional atmosphere, are treated as irrelevant in representational conversion? Blind spots are thus structural products, generated by the scope of classification systems, the distribution of training samples, and platforms' rigid demand for usable output.

Blind spots also offer art and critical practice a reverse point of entry. Strategies such as blur, occlusion, overlay, abnormal composition, and stylized treatment do not simply make images harder to recognize. They force the system to expose its own boundaries and preferences: which standardized samples it depends on, which unclassifiable objects it penalizes, and how it narrows the range of the visible in the name of efficiency and accuracy. It should be emphasized that such cracks can become openings for critique, but they can also generate new exclusions and injuries. Reverse use is therefore not a simple triumphalist narrative; it is a practical condition that demands careful discussion.

More generally, blind spots remind us that semanticization cannot cover all experience, and that machine visibility is not equivalent to visual experience itself. The further the semanticization of photography advances, the more necessary it becomes to attend to what the system marks as noise, low confidence, or invalid information. These elements are not merely residues; at times they become the very point of entry for understanding image politics and the regime of visibility. Yet photography's remainder does not exist only where systems fail. It also arises from embodied viewing and lived experience themselves. The next section turns to this dimension, which is even more resistant to semanticization.

6

What Remains Beyond Language: Haptic Visuality and Embodied Viewing

The previous section used misrecognition and blind spots as points of entry in order to show that the regime of semanticization is not transparent. Even when the system does not missee, it often produces only an operational summary and cannot encompass those modes of viewing that precede language and depend on the body. Semanticization translates images into keywords, labels, and descriptions, making them easier to retrieve and reproduce; at the same time, some experiences are flattened in the process of translation. The key question thus becomes: under conditions of expanding semanticization, do some dimensions of image experience still inevitably slip away from naming and archiving?

Laura U. Marks proposes the notion of haptic visuality to describe a mode of looking that stays close to the surface. When grain, texture, blur, contrast, and fluctuations of light are foregrounded, the gaze does not rush to confirm what something is, but moves slowly across the image as if touching its material surface[17,18]. In photographic experience, this kind of viewing often emerges in low-light, out-of-focus, darkened, or heavily grained images. A system may summarize them as blurry, noisy, or underexposed, but for viewers these so-called defects are often precisely the channels through which feeling enters. If an old family photograph has grayed edges and lost detail, we may not first identify the figures; we may instead first be seized by the trace of time, the texture of the paper, and the softness of the light.

In discussing photography's empathic mechanism, Wei Wangkai emphasizes that images can act before narrative: they first draw a person into an emotional texture, and only afterward does explanation slowly catch up[19]. Haptic viewing aptly illustrates this sequence of feeling first and naming later. When viewing begins from feeling, context is not abolished so much as delayed. By contrast, automated captions and keyword summaries are better at converting images into reusable conclusions: they preserve nameable subjects and actions first, while compressing surface texture into a background property. The more smoothly a photograph is summarized by the system, the more likely it is to lose the tactile layer that encourages human lingering.

Within this context, Barthes's punctum also becomes easier to locate. Punctum is not a stable feature of the object, but a displacement produced when a detail collides accidentally with a viewer's lived experience[3]. It may be a fold in a collar, the gesture of a hand, or an unremarkable object in a corner. Statistically, such details may not matter much, yet they can suddenly draw the viewer into private memory. Algorithms are better at handling commonality and frequent description, and therefore more likely to foreground what type of photograph this is or what objects it contains. They are far less capable of knowing in advance which detail will be activated by whose life experience. Punctum therefore constitutes a remainder that resists full semanticization: it is not a matter of insufficient information, but of meaning generation depending on a particular person.

Semanticization has already become part of the image regime, and viewing practice has thereby become one of the few elements that can still be rearranged. Slow looking, repeated looking, misreading, material analysis, bodily movement through exhibition space, and long-duration engagement with the image surface do not aim at efficient operational use. Yet they allow image experience to regain texture and preserve room for empathy and reflection. Algorithms can assist us in extraction and archiving, but they can hardly replace the human capacity to linger in uncertainty, to be affected, and then to return and look again. So long as such lingering remains, photography will not be reducible to labels, vectors, and instructions alone.

7

Conclusion: Photography Has Not Ended, but Its Regime Has Changed

This article has used the concept of the semanticization of photography to describe an emerging institutional transformation: photographic images are pre-labeled, embedded, and summarized within the processing chains of platforms and multimodal models, and are then called up as semantic objects within loops of retrieval, recommendation, and generation. As a result, the visibility, interpretability, and memorability of images are increasingly organized inside machines before they are decided at the scene of viewing.

Three lines of argument support this claim. First, Barthes's discussion of polysemy and anchorage suggests that when anchorage shifts from titles and captions into vector spaces and ranking rules, the center of interpretive authority shifts with it[6]. Second, query-based seeing rewrites looking into a task flow centered on asking and calling up, with prompts functioning as a new filtering frame that compresses images more quickly into reusable conclusions. Third, diffusion models and related generative mechanisms push photography's temporal logic from event slices toward version selection, making appearance alone unable to guarantee a relation to traces of the scene[13].

These changes do not mean that photography has failed; rather, they mean that its functions are increasingly being redefined within networked software environments. Palmer's notion of redundancy can be understood here as an infrastructural condition: the value of a single photograph no longer derives primarily from its self-sufficiency as an isolated work, but from its indexability, matchability, and reusability within the database[4,5]. Photography is therefore no longer simply a matter of taking the world down as an image; it increasingly provides material that can be called upon for retrieval, ranking, and generation.

For image humanities and photographic criticism, this requires us to expand the unit of analysis from the triangle of work, author, and viewer to the larger configuration of pipeline, interface, and institution. To discuss a photograph today is not only to ask what it presents, but also how it has been labeled, retrieved, ranked, and summarized, and how it enters circuits of training and generation. Archives, pedagogy, and curatorial practice likewise need to renew their problematics: distinguishing the production chains of trace-based and synthetic images, recording verifiable provenance and processes of handling, and cultivating a critical literacy toward metadata and model-generated summaries.

At the same time, this article insists that semanticization cannot exhaust image experience. Misrecognition and blind spots reveal the boundaries of classification systems, while haptic visuality and punctum remind us that viewing still includes embodied lingering, contingent affect, and forms of meaning generation that cannot be fully anticipated[3,17,18]. From the perspective of Vilém Flusser's theory of apparatus, the crucial issue is not whether to reject the apparatus, but how to understand the possibilities it prestructures and how to reorganize within it the agency of both author and viewer[20].

In sum, photography has not ended, but its regime is being reorganized: from one centered on optical witnessing to one centered on computation, retrieval, and operational reuse. Any adequate analysis of contemporary photography must therefore confront the new visibility, new seeing, and new memory produced by this infrastructure, while also preserving room for those experiences that remain resistant to semanticization.

References

References

- [1] Benjamin, W. (1969). The work of art in the age of mechanical reproduction. In H. Arendt (Ed.), Illuminations (H. Zohn, Trans., pp. 217--251). New York, NY: Schocken Books.

- [2] Sontag, S. (1977). On photography. New York, NY: Farrar, Straus and Giroux.

- [3] Barthes, R. (1980). Camera lucida: Reflections on photography (R. Howard, Trans.). New York, NY: Hill and Wang.

- [4] Palmer, D. (2013). Redundant photographs: Cameras, software and human obsolescence. In D. Rubinstein, J. Golding, & A. Fisher (Eds.), On the verge of photography: Imaging beyond representation (pp. 149--166). Birmingham, England: ARTicle Press.

- [5] Palmer, D. (2026). Redundant photographs: Cameras, software, and human obsolescence (Q. Chen, Trans.). Chinese Photography, (2).

- [6] Barthes, R. (1977). Image-music-text (S. Heath, Trans.). London, England: Fontana Press.

- [7] Radford, A., Kim, J. W., Hallacy, C., et al. (2021). Learning transferable visual models from natural language supervision. In Proceedings of the 38th International Conference on Machine Learning (pp. 8748--8763).

- [8] van den Oord, A., Li, Y., & Vinyals, O. (2018). Representation learning with contrastive predictive coding. arXiv. https://arxiv.org/abs/1807.03748.

- [9] Crawford, K., & Paglen, T. (2019). ImageNet Roulette [Artwork].

- [10] Liu, H., Li, C., Wu, Q., et al. (2023). Visual instruction tuning. arXiv. https://arxiv.org/abs/2304.08485.

- [11] Steyerl, H. (2013). How not to be seen: A fucking didactic educational .MOV file [Video artwork].

- [12] Cartier-Bresson, H. (1952). The decisive moment. New York, NY: Simon and Schuster.

- [13] Rombach, R., Blattmann, A., Lorenz, D., et al. (2022). High-resolution image synthesis with latent diffusion models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (pp. 10684--10695).

- [14] Klingemann, M. (2018). Memories of Passersby I [Artwork].

- [15] MT. (2024). The Honest Photographer [Online artwork]. https://mt-zeng.com/the-honest-photographer.

- [16] Shinseungback Kimyonghun. (2012). Cloud Face [Artwork].

- [17] Marks, L. U. (1998). Video haptics and erotics. Screen, 39(4), 331--348.

- [18] Marks, L. U. (2000). The skin of the film: Intercultural cinema, embodiment, and the senses. Durham, NC: Duke University Press.

- [19] Wei, W. (2026). From viewing to being seen: Photography as a stable channel of empathy. Chinese Photography, (2).

- [20] Flusser, V. (2000). Towards a philosophy of photography (A. Mathews, Trans.). London, England: Reaktion Books.